diffray vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

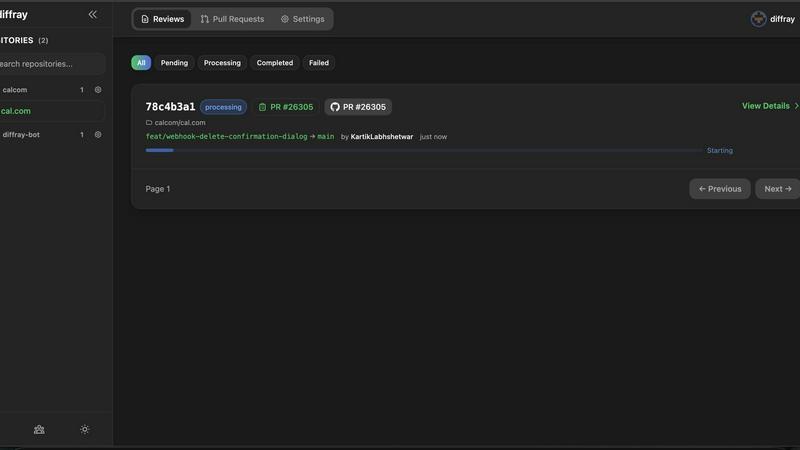

diffray

diffray uses more than 30 specialized AI agents to catch real bugs in your code, not just nitpicks.

Last updated: February 28, 2026

OpenMark AI

OpenMark AI lets you effortlessly benchmark over 100 LLMs on your specific tasks, comparing cost, speed, quality, and stability in real time.

Last updated: March 26, 2026

Feature Comparison

diffray

Multi-Agent Specialist Architecture

This is diffray's core differentiator. The platform employs over 30 distinct AI agents, each trained and optimized for a specific domain, such as security (OWASP Top 10, dependency vulnerabilities), performance (memory leaks, inefficient algorithms), concurrency (race conditions, deadlocks), and codebase consistency. A security agent scrutinizes your code for security flaws while a separate performance agent looks for bottlenecks, an approach intended to produce deeper and more accurate analysis than a single generalist model can.
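The specialist-per-domain idea described above can be illustrated with a toy sketch. This is not diffray's implementation, which is not public in this comparison. The `Finding` type, the two agent functions, and their string-matching rules are all hypothetical stand-ins for what would in practice be separate models.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    agent: str    # which specialist raised the issue
    line: int     # 1-based line number within the reviewed snippet
    message: str


# Each "agent" is a plain function standing in for a specialized model.
Agent = Callable[[List[str]], List[Finding]]


def security_agent(lines: List[str]) -> List[Finding]:
    """Toy rule: flag eval(), a common injection risk."""
    return [
        Finding("security", i, "avoid eval() on untrusted input")
        for i, text in enumerate(lines, start=1)
        if "eval(" in text
    ]


def performance_agent(lines: List[str]) -> List[Finding]:
    """Toy rule: flag repeated string concatenation via +=."""
    return [
        Finding("performance", i, "repeated += on strings; consider str.join")
        for i, text in enumerate(lines, start=1)
        if "+= str(" in text
    ]


def review(code: str, agents: List[Agent]) -> List[Finding]:
    """Run every specialist over the same code and merge findings by line."""
    lines = code.splitlines()
    findings = [f for agent in agents for f in agent(lines)]
    return sorted(findings, key=lambda f: (f.line, f.agent))


snippet = "result = eval(user_input)\nout = ''\nfor n in nums:\n    out += str(n)\n"
report = review(snippet, [security_agent, performance_agent])
for finding in report:
    print(f"L{finding.line} [{finding.agent}] {finding.message}")
```

Each specialist sees the same input but applies only its own rules, and the merged report keeps track of which agent raised each issue.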

Full-Repository Context Awareness

diffray doesn't look at the patch in isolation, a common weakness of simpler tools. It pulls in the full context of your repository, so agents can analyze how new changes interact with existing architecture, spot deviations from established patterns, and identify breaks in consistency that would be invisible when looking at a diff alone. This context turns superficial comments into guidance that reflects your project's own conventions.

Low-Noise, High-Signal Feedback

By leveraging its team of specialists, diffray virtually eliminates the plague of generic, low-value comments. The feedback it generates is concise, professional, and directly actionable. It prioritizes critical issues that matter, suppressing the trivial nitpicks that waste time. The output feels like it was written by a seasoned senior engineer who knows what's important, not a robot on a linting spree.
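One simple way to picture "prioritize critical issues, suppress nitpicks" is a severity threshold applied to review comments. The sketch below is illustrative only. The `Severity` scale, the `Comment` type, and the default threshold are assumptions made for the example, not diffray's actual ranking logic.

```python
from enum import IntEnum
from typing import List, NamedTuple


class Severity(IntEnum):
    NITPICK = 1
    MINOR = 2
    MAJOR = 3
    CRITICAL = 4


class Comment(NamedTuple):
    severity: Severity
    text: str


def high_signal(comments: List[Comment],
                threshold: Severity = Severity.MAJOR) -> List[Comment]:
    """Keep comments at or above the threshold, most severe first."""
    kept = [c for c in comments if c.severity >= threshold]
    return sorted(kept, key=lambda c: c.severity, reverse=True)


raw = [
    Comment(Severity.NITPICK, "prefer single quotes"),
    Comment(Severity.CRITICAL, "SQL query built from unsanitized input"),
    Comment(Severity.MINOR, "variable name could be clearer"),
    Comment(Severity.MAJOR, "lock released before shared state is updated"),
]

for comment in high_signal(raw):
    print(f"{comment.severity.name}: {comment.text}")
```

Raising or lowering `threshold` trades completeness for noise, which is the same trade-off a team makes when tuning any automated reviewer.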

Integrated Workflow & Team Metrics

diffray seamlessly integrates into your existing GitHub or GitLab workflow, posting comments directly on pull requests. Beyond individual reviews, it provides teams with valuable analytics and metrics, highlighting common vulnerability patterns, tracking review time savings, and offering insights into code quality trends over time. This turns code review from a reactive gate into a strategic tool for continuous improvement.

OpenMark AI

User-Friendly Task Configuration

OpenMark AI offers a simple yet powerful task configuration interface, allowing users to describe their benchmarking tasks effortlessly. Whether you're looking to test for classification, translation, or data extraction, this feature simplifies the setup process, making it accessible even for those with minimal coding experience.

Comprehensive Model Comparison

With OpenMark AI, you can test over 100 different models concurrently, giving you a broad perspective on which AI solution fits your specific needs. This comprehensive comparison allows users to evaluate performance metrics like accuracy and stability under various conditions, ensuring that you select the best model for your application.

Real-Time API Call Results

Say goodbye to outdated metrics and marketing fluff. OpenMark AI provides real-time results from actual API calls to models, ensuring that you are working with the most accurate performance data. This feature allows teams to assess how each model performs under identical conditions, enabling better-informed decisions.

No Setup Hassles

One of the standout aspects of OpenMark AI is its seamless user experience, which eliminates the need for API key configurations and complex setups. Users can dive straight into benchmarking without worrying about technical barriers, making it an ideal choice for teams looking to integrate LLMs quickly and efficiently.

Use Cases

diffray

Accelerating Pull Request Throughput for Fast-Moving Teams

For development teams pushing multiple merges per day, the PR review bottleneck is real. diffray acts as a first-pass expert reviewer available 24/7, instantly surfacing critical issues and leaving detailed, context-aware comments. This allows human reviewers to focus on higher-level architecture and logic, dramatically speeding up the entire cycle and getting features to production faster without sacrificing quality.

Upskilling Junior Developers and Enforcing Standards

diffray serves as an always-available mentoring tool for junior developers. By providing immediate, expert feedback on security practices, performance implications, and code style, it helps them learn best practices in real time. Simultaneously, it acts as an unbiased enforcer of team and organizational coding standards, ensuring consistency across the entire codebase as the team grows.

Proactive Security and Compliance Auditing

Security can't be an afterthought. diffray's dedicated security agents scan every pull request for vulnerabilities, misconfigurations, and violations of standards such as the OWASP Top 10. This embeds security directly into the developer workflow (a practice known as shifting left), helping keep costly security bugs out of production and making audit trails a natural byproduct of development.

Legacy Code Modernization and Refactoring

When tackling a large, legacy codebase, understanding the impact of changes is daunting. diffray's contextual analysis is invaluable here. It can help identify how new refactoring efforts might break existing patterns, pinpoint hidden technical debt related to performance or concurrency, and ensure that modernization efforts don't inadvertently introduce new classes of bugs, making large-scale refactors safer and more predictable.

OpenMark AI

Model Selection for AI Features

When developing new AI features, teams can leverage OpenMark AI to compare different models, ensuring that they choose the one that best meets their requirements in terms of performance and cost. This process minimizes the risk of deploying a suboptimal model and enhances overall project success.

Pre-Deployment Validation

OpenMark AI serves as a valuable tool for validating models before they go live. By running benchmarks and analyzing performance metrics, teams can confirm that the selected model will deliver consistent and reliable results, reducing the likelihood of post-deployment issues.

Cost Efficiency Assessment

For organizations focused on maintaining budget constraints, OpenMark AI allows for a detailed analysis of cost per API call. This insight helps teams prioritize models that offer the best value for their specific tasks, ultimately leading to smarter financial decisions.
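Cost per call generally comes down to token counts multiplied by per-token prices. The sketch below shows that arithmetic under stated assumptions: the model names and prices are made up for illustration, and the formula is a common pricing scheme, not a description of how OpenMark AI computes its figures.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens (hypothetical value)
    output_per_million: float  # USD per 1M output tokens (hypothetical value)


def cost_per_call(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request with the given token counts."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


# Hypothetical models and prices, for illustration only.
PRICES: Dict[str, Pricing] = {
    "model-a": Pricing(input_per_million=3.00, output_per_million=15.00),
    "model-b": Pricing(input_per_million=0.50, output_per_million=1.50),
}

costs = {name: cost_per_call(p, input_tokens=1200, output_tokens=300)
         for name, p in PRICES.items()}
cheapest = min(costs, key=costs.get)

for name, usd in costs.items():
    print(f"{name}: ${usd:.5f} per call")
print("cheapest:", cheapest)
```

With these assumed prices, a 1,200-token prompt and 300-token reply costs $0.0081 on model-a and $0.00105 on model-b. Cost alone does not decide the choice, since the cheaper model still has to meet the quality bar for the task.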

Consistency Testing in Outputs

OpenMark AI is essential for teams that require consistent output from language models. By benchmarking models against the same tasks multiple times, users can gauge how stable model performance is over repeated runs, ensuring they choose a model that delivers reliable results.
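Stability over repeated runs can be summarized with a mean and a standard deviation per model. The sketch below uses invented scores and a hypothetical `stability` helper. It shows the general statistic, not OpenMark AI's scoring method.

```python
from statistics import mean, pstdev
from typing import Dict, List


def stability(scores: List[float]) -> Dict[str, float]:
    """Summarize repeated-run quality scores for one model."""
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),  # population standard deviation of the runs
        "min": min(scores),
        "max": max(scores),
    }


# Invented scores from five runs of the same task, for illustration only.
runs = {
    "steady-model": [0.80, 0.82, 0.81, 0.79, 0.80],
    "erratic-model": [0.95, 0.60, 0.90, 0.55, 0.98],
}

summary = {name: stability(scores) for name, scores in runs.items()}
for name, stats in summary.items():
    print(f"{name}: mean={stats['mean']:.3f} stdev={stats['stdev']:.3f} "
          f"range=[{stats['min']:.2f}, {stats['max']:.2f}]")
```

In this made-up data the erratic model has the best single run (0.98) but a lower mean (0.796 versus 0.804) and a much wider spread, which is exactly the kind of variance a single lucky output would hide.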

Overview

About diffray

Let's be brutally honest: most AI code review tools are a massive disappointment. They promise intelligent automation but deliver a firehose of generic, low-value comments that bury the real issues in a soul-crushing avalanche of noise. You end up spending more time dismissing false positives than you save.

diffray is the tool that finally breaks this cycle. It’s a revolutionary AI-powered code review platform built on a fundamentally smarter architecture. Instead of relying on a single, generalist AI model trying to be an expert at everything, diffray deploys a curated team of over 30 specialized AI agents. Think of it as having a dedicated, world-class expert for security vulnerabilities, another for performance bottlenecks, another for concurrency pitfalls, and so on. This multi-agent system conducts deep, contextual investigations into your pull requests, understanding the full scope of your repository, not just the isolated diff.

The result is exactly what development teams desperately need: a dramatic reduction in false positives, a significantly higher catch rate for critical, actionable bugs, and clean, professional feedback that genuinely respects a developer's time. It transforms code review from a tedious, time-sucking chore into a genuine quality accelerator. Teams report slashing their average PR review time from 45 minutes to just 12. If you're tired of the noise and ready for signal, diffray is the only tool you should be considering.

About OpenMark AI

OpenMark AI is a cutting-edge web application designed for task-level benchmarking of large language models (LLMs). This innovative tool allows users to articulate testing parameters in plain language, enabling simultaneous evaluation of multiple models within a single session. OpenMark AI provides invaluable insights into cost per request, latency, scored quality, and stability through repeated runs, ensuring that users can identify variance in model performance rather than relying on a single fortunate output.

Tailored for developers and product teams, OpenMark AI streamlines the model selection process before launching AI features. Its hosted benchmarking service eliminates the hassle of configuring various API keys, allowing users to focus on their testing without the technical overhead. By offering side-by-side results derived from actual API calls, OpenMark AI empowers users to make informed decisions based on real data rather than marketing claims. This platform is particularly beneficial for those who prioritize cost efficiency and consistency in output quality, making it an essential tool for pre-deployment assessments in AI projects.

Frequently Asked Questions

diffray FAQ

How is diffray different from GitHub Copilot or other AI coding assistants?

This is a crucial distinction. Tools like Copilot are primarily generative—they help you write new code. diffray is analytical—it reviews and critiques code that has already been written. Think of Copilot as a pair programmer helping you type, while diffray is the meticulous senior engineer reviewing the final pull request. They serve complementary but entirely different purposes in the development lifecycle.

Does diffray replace human code reviewers?

Absolutely not, and it doesn't try to. diffray's goal is to augment human reviewers, not replace them. It automates the tedious, repetitive parts of review (catching common bugs, enforcing style, basic security checks) so your human team can dedicate their valuable cognitive bandwidth to complex logic, architecture, design patterns, and mentorship—the things AI still cannot do well.

What programming languages and frameworks does diffray support?

diffray's multi-agent architecture focuses on concepts that apply across languages, such as security, performance, and concurrency, so it is positioned to support a wide range of popular languages and frameworks. This comparison does not list the specific languages supported, so check the official documentation for the most current and detailed list of supported technologies.

How does diffray handle the privacy and security of our source code?

For any serious development team, this is the first question. This comparison does not cover diffray's specific data-handling practices. Tools in this category typically offer cloud-based processing with encryption and data residency controls, and some offer self-hosted or on-premise deployments for organizations with strict compliance requirements, but none of this is confirmed here for diffray. Review the vendor's official security documentation and data processing agreement for guarantees.

OpenMark AI FAQ

What types of models can I benchmark with OpenMark AI?

OpenMark AI supports over 100 LLMs, which you can benchmark on a variety of tasks, including classification, translation, and more.

Do I need to set up API keys to use OpenMark AI?

No, OpenMark AI eliminates the need for individual API key setups. Users can begin benchmarking immediately without the technical overhead of configuring multiple keys.

How does OpenMark AI ensure the accuracy of comparison results?

OpenMark AI provides side-by-side results based on real API calls to models, ensuring that you receive accurate and up-to-date performance data rather than relying on cached or promotional figures.

Is OpenMark AI suitable for non-developers?

Absolutely! The user-friendly interface and no-code approach make OpenMark AI accessible to non-developers, allowing anyone interested in AI model benchmarking to participate without requiring extensive technical knowledge.

Alternatives

diffray Alternatives

diffray is a specialized AI code review tool that stands apart in the crowded developer tools market. It belongs to the category of intelligent automation for pull requests, but its unique multi-agent architecture moves it beyond simple linting or generic AI suggestions. It’s for teams that want deep, contextual bug catching, not just surface-level nitpicks.

Developers often search for alternatives for a few key reasons. Budget constraints or specific pricing models can be a factor, as can the need for integration with a particular tech stack or CI/CD platform. Some teams might prioritize a different feature balance, like extensive language support over deep specialization, or require a self-hosted solution for security compliance.

When evaluating other options, look beyond the marketing hype. The core question is whether a tool reduces noise while catching critical issues. Prioritize solutions that understand your full codebase context, not just the diff. True value comes from actionable feedback that saves engineering time, not from generating an overwhelming volume of low-priority comments.

OpenMark AI Alternatives

OpenMark AI is a powerful web application designed for benchmarking over 100 large language models (LLMs) based on specific tasks. It enables users to evaluate various models in terms of cost, speed, quality, and stability, providing a comprehensive view of performance rather than relying on single outputs. This tool primarily serves developers and product teams who require thorough comparisons to make informed decisions before implementing AI features.

Users often seek alternatives to OpenMark AI due to factors such as pricing, specific feature sets, or platform compatibility. It's essential to consider what aspects matter most for your use case, whether that’s the breadth of model support, ease of use, or any unique functionalities that align with your project’s needs.

When selecting an alternative, prioritize tools that deliver accurate benchmarking, transparent pricing, and consistent performance metrics, ensuring they fit seamlessly into your development workflow.