Agenta vs Fallom

Side-by-side comparison to help you choose the right tool.

Agenta is an open-source platform that streamlines LLM development, enabling teams to collaborate and build reliable LLM applications.

Last updated: March 1, 2026

Fallom provides real-time observability for LLMs, ensuring precise tracking, debugging, and cost management of AI.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta allows you to centralize all your prompts, evaluations, and traces in one platform. This eliminates the confusion of scattered files and improves accessibility for all team members, ensuring everyone is on the same page.

Automated Evaluations

With Agenta, you can create a systematic process to run experiments, track results, and validate every change made to your LLM application. Automated evaluations replace guesswork with evidence by showing which changes affect performance.
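To make the idea of an automated evaluation concrete, here is a minimal sketch in plain Python. It does not use Agenta's SDK; the function names, the two variants, and the test cases are hypothetical stand-ins, and exact match is only one of many possible scoring rules.

```python
def exact_match_score(generate, test_cases):
    """Return the fraction of test cases where the output equals the expected text."""
    hits = sum(
        1 for case in test_cases
        if generate(case["input"]).strip() == case["expected"]
    )
    return hits / len(test_cases)


# Hypothetical stand-ins for two application variants.
# A real evaluation would call an LLM with two different prompts or models.
def variant_a(text):
    return text.upper()


def variant_b(text):
    return text.title()


# Hypothetical test set: inputs paired with the outputs we expect.
test_cases = [
    {"input": "hello world", "expected": "HELLO WORLD"},
    {"input": "ok", "expected": "OK"},
]

# Score every variant against the same test set so results are comparable.
scores = {
    "variant_a": exact_match_score(variant_a, test_cases),
    "variant_b": exact_match_score(variant_b, test_cases),
}
print(scores)
```

Running every variant against the same fixed test set is what turns a change into a measurable difference rather than an impression.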

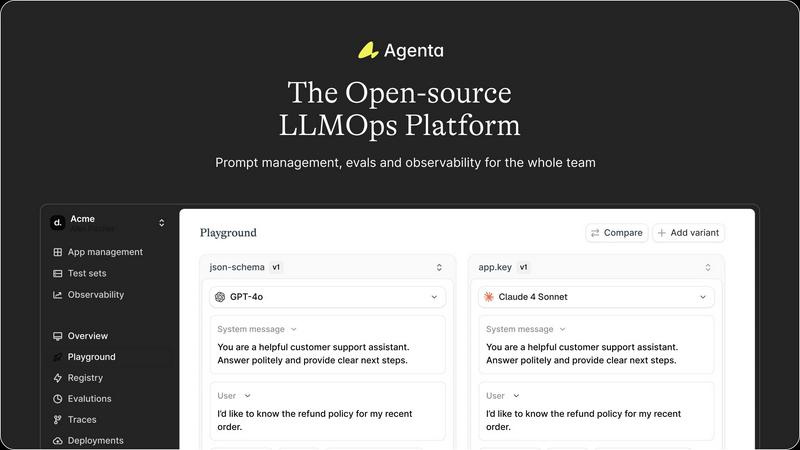

Unified Playground

The platform includes a unified playground where you can compare prompts and models side-by-side. This feature is invaluable for identifying the best-performing prompts and models, allowing for quick iterations and improvements.

Real-Time Observability

Agenta provides tools for monitoring production systems and tracing every request. This feature allows you to gather user feedback efficiently, debug your AI systems, and detect regressions, ensuring a smoother user experience.

Fallom

Real-Time Observability

Fallom offers real-time observability for AI agents, enabling users to track every function call made by LLMs. This feature provides insights into timing, costs, and performance metrics, allowing teams to debug with confidence and optimize workflows effectively.

Cost Attribution

With Fallom's cost attribution capabilities, organizations can track spending on a granular level. Users can analyze costs per model, user, and team, providing full transparency essential for effective budgeting and financial planning in LLM operations.
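As a rough illustration of what per-model, per-user, and per-team attribution involves, the sketch below sums estimated costs over a list of call records. It is not Fallom's API: the record fields, the price table, and the `attribute_costs` helper are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider.
PRICE_PER_1K_TOKENS = {"model-a": 0.002, "model-b": 0.03}


def attribute_costs(calls, key):
    """Sum the estimated cost of each call, grouped by the given record field."""
    totals = defaultdict(float)
    for call in calls:
        cost = call["tokens"] / 1000 * PRICE_PER_1K_TOKENS[call["model"]]
        totals[call[key]] += cost
    return dict(totals)


# Hypothetical call records, one per LLM request.
calls = [
    {"model": "model-a", "user": "alice", "team": "search", "tokens": 1000},
    {"model": "model-b", "user": "bob", "team": "search", "tokens": 2000},
    {"model": "model-a", "user": "alice", "team": "support", "tokens": 500},
]

by_model = attribute_costs(calls, "model")
by_user = attribute_costs(calls, "user")
by_team = attribute_costs(calls, "team")
print(by_model, by_user, by_team)
```

The same records answer all three questions; only the grouping key changes, which is why tagging each call with user and team metadata at capture time matters.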

Compliance Ready

Fallom is built with compliance in mind, offering complete audit trails that help organizations meet requirements under frameworks such as the EU AI Act, SOC 2, and GDPR. All interactions with LLMs are logged and traceable, which strengthens organizational accountability.

Session Tracking

The session tracking feature allows users to group traces by session, user, or customer, providing comprehensive context for each LLM interaction. This capability is crucial for understanding user behavior and refining LLM performance based on real-world usage.
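Grouping traces by a shared identifier can be sketched in a few lines. The example below is illustrative only and does not reflect Fallom's data model; the trace fields and the `group_traces` helper are hypothetical.

```python
from collections import defaultdict


def group_traces(traces, field):
    """Map each distinct value of `field` to the IDs of the traces that carry it."""
    groups = defaultdict(list)
    for trace in traces:
        groups[trace[field]].append(trace["trace_id"])
    return dict(groups)


# Hypothetical traces, each tagged with session, user, and customer.
traces = [
    {"trace_id": "t1", "session": "s1", "user": "alice", "customer": "acme"},
    {"trace_id": "t2", "session": "s1", "user": "alice", "customer": "acme"},
    {"trace_id": "t3", "session": "s2", "user": "bob", "customer": "acme"},
]

by_session = group_traces(traces, "session")
by_customer = group_traces(traces, "customer")
print(by_session)
print(by_customer)
```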

Use Cases

Agenta

Collaborative Prompt Development

In teams where multiple stakeholders are involved, Agenta facilitates collaborative prompt development by providing a shared workspace. This enables product managers, developers, and domain experts to work together effectively, improving the quality of prompts.

Rigorous Evaluation Processes

Agenta is ideal for organizations that require rigorous evaluation processes. By automating evaluations and integrating domain expert feedback, teams can ensure that their LLMs meet high standards before deployment, reducing the risk of errors in production.

Debugging and Troubleshooting

When issues arise in production, Agenta’s observability tools help teams trace failures to their source. This capability allows for more efficient debugging, as you can pinpoint problems quickly and take corrective action.

Rapid Iteration of LLMs

For teams focused on rapid iteration, Agenta provides the tools necessary to test and compare various prompts and models in real time. This accelerates the development cycle, allowing businesses to bring reliable AI features to market faster.

Fallom

Debugging LLM Workflows

Fallom is invaluable for teams debugging LLM workflows. By providing detailed insights into every call and its performance, users can quickly identify bottlenecks and optimize their models for better efficiency.

Cost Management

Organizations can leverage Fallom's cost attribution feature to manage their LLM-related expenses effectively. By tracking costs per user and model, teams can make informed decisions about resource allocation and budget planning.

Ensuring Compliance

For companies operating under strict regulatory frameworks, Fallom's compliance-ready features are essential. Users can maintain audit trails and ensure proper consent tracking, safeguarding their operations against potential legal pitfalls.

Performance Evaluation

Fallom enables organizations to run evaluations on LLM outputs, ensuring quality and accuracy before deployment. By analyzing metrics such as accuracy, relevance, and hallucination rates, teams can refine their models to meet high standards.

Overview

About Agenta

Agenta is an open-source LLMOps platform designed as a comprehensive solution for teams developing large language model (LLM) applications. It addresses the chaos often associated with LLM development by centralizing disparate workflows into a structured and collaborative environment. With Agenta, developers, product managers, and domain experts can come together, enhancing communication and efficiency. The platform provides integrated tools for prompt management, evaluation, and observability, transforming the LLM development process into a systematic engineering discipline. By eliminating guesswork and silos, Agenta helps teams ship reliable AI features with confidence. If your organization has been struggling with the unpredictability of LLMs and disjointed workflows, Agenta offers the infrastructure needed to streamline development and foster collaboration.

About Fallom

Fallom is a cutting-edge AI-native observability platform specifically designed for large language models (LLMs) and agent workloads. As organizations increasingly rely on LLMs to drive their operations, the need for comprehensive visibility into these systems has never been greater. Fallom addresses this need by providing detailed insights into every LLM call made in production, allowing teams to trace end-to-end processes that include prompts, outputs, tool calls, tokens, latency, and associated costs. Tailored for developers, data scientists, and compliance officers, Fallom not only helps monitor LLM operations in real time but also accelerates debugging and improves performance insights. Its rich context around sessions, users, and customers, combined with robust enterprise features such as audit trails and consent tracking, makes Fallom indispensable for organizations aiming to ensure compliance and optimize their LLM deployments. With an OpenTelemetry-native SDK, teams can set up monitoring in under five minutes, making real-time usage tracking and cost attribution a seamless and efficient process.

Frequently Asked Questions

Agenta FAQ

What makes Agenta different from other LLM tools?

Agenta stands out by providing a comprehensive, open-source platform that centralizes workflows, enhances collaboration, and applies LLMOps best practices. This structured approach minimizes guesswork and maximizes reliability.

Is Agenta suitable for small teams?

Absolutely. Agenta is designed to cater to teams of all sizes, from small startups to large enterprises. Its collaborative features and centralized management make it particularly useful for teams looking to streamline their LLM development processes.

Can Agenta integrate with existing tools?

Yes. Agenta integrates with frameworks and model providers such as LangChain and OpenAI. This flexibility allows teams to keep their existing tech stack while benefiting from Agenta's features.

Is there a community for Agenta users?

Yes, Agenta boasts an active community where users can ask questions, share ideas, and collaborate on projects. Joining the community can help you get the most out of Agenta and connect with other AI builders.

Fallom FAQ

What types of organizations benefit from using Fallom?

Fallom is designed for a wide range of organizations, including those in regulated industries like finance and healthcare, as well as tech companies utilizing LLMs for various applications. Its features cater to developers, data scientists, and compliance teams.

How fast can I set up Fallom for my LLM monitoring needs?

Setting up Fallom is quick: the OpenTelemetry-native SDK allows users to begin monitoring their LLMs in under five minutes. This rapid setup is ideal for teams looking to implement observability without extensive overhead.

What kind of data does Fallom track during LLM calls?

Fallom tracks a variety of data points during LLM calls, including input prompts, output responses, tool calls, token usage, latency, and cost associated with each interaction. This comprehensive data helps teams analyze performance and optimize their deployments.

How does Fallom ensure user privacy while capturing data?

Fallom includes a privacy mode that allows organizations to disable content capture for sensitive data while still maintaining telemetry. This feature helps organizations comply with privacy regulations and protects user confidentiality in LLM interactions.
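The general pattern behind such a mode, keeping metrics while dropping content, might look like the sketch below. This is not Fallom's implementation or configuration; the `build_record` helper, its `capture_content` flag, and the record fields are assumptions for illustration.

```python
def build_record(call, capture_content=True):
    """Build a telemetry record, omitting prompt and output text when capture is off."""
    # Metrics are always recorded.
    record = {
        "model": call["model"],
        "tokens": call["tokens"],
        "latency_ms": call["latency_ms"],
    }
    # Content is recorded only when capture is explicitly allowed.
    if capture_content:
        record["prompt"] = call["prompt"]
        record["output"] = call["output"]
    return record


# Hypothetical LLM call with sensitive content.
call = {
    "model": "model-a",
    "tokens": 42,
    "latency_ms": 310,
    "prompt": "Summarize this patient note",
    "output": "Summary text",
}

full = build_record(call)
private = build_record(call, capture_content=False)
print(private)
```

With capture disabled, the record still supports latency and cost analysis, but the sensitive text never leaves the application.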

Alternatives

Agenta Alternatives

Agenta is an open-source platform designed to help teams build and manage reliable LLM applications, serving as a mission control for LLMOps. It centralizes the chaotic process of developing AI features, enabling collaboration among developers, product managers, and domain experts. Users often seek alternatives to Agenta due to various factors such as pricing, specific feature sets, or compatibility with existing workflows and platforms. When choosing an alternative, it's important to consider the platform's ability to facilitate experimentation, provide robust evaluation tools, and support seamless collaboration across team members. Ensuring that the alternative aligns with your team's specific needs and workflows can make a significant difference in the development process.

Fallom Alternatives

Fallom is an AI-native observability platform specifically designed for large language models (LLMs) and agent workloads. It excels in providing real-time insights into every LLM call made in production, offering developers and data scientists the ability to track and debug their AI operations efficiently. Users often seek alternatives to Fallom for various reasons, including pricing considerations, specific feature sets, or compatibility with their existing platforms. When looking for an alternative, it’s essential to evaluate factors such as the depth of observability, ease of integration, and compliance features. Consider whether the alternative can provide real-time monitoring and cost management, as these are critical for optimizing LLM deployments and ensuring regulatory adherence.