Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Agenta is an open-source platform that streamlines LLM development, enabling teams to collaborate and build reliable LLM applications.

Last updated: March 1, 2026

OpenMark AI lets you benchmark over 100 LLMs on your specific tasks, comparing cost, speed, quality, and stability in real time.

Last updated: March 26, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta allows you to centralize all your prompts, evaluations, and traces in one platform. This eliminates the confusion of scattered files and improves accessibility for all team members, ensuring everyone is on the same page.

Automated Evaluations

With Agenta, you can create a systematic process to run experiments, track results, and validate every change made to your LLM application. Automated evaluations replace guesswork with evidence by showing which changes affect performance.
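
As a rough illustration of what validating a change with evidence can look like, the sketch below scores two prompt variants against the same test set and flags a regression. It does not use Agenta's SDK; `exact_match_score`, `is_regression`, and the two stand-in "variants" are hypothetical names, and the lookup tables replace real model calls.

```python
def exact_match_score(predict, test_cases):
    """Fraction of test cases where the prediction equals the expected answer."""
    hits = sum(1 for prompt, expected in test_cases if predict(prompt) == expected)
    return hits / len(test_cases)


def is_regression(baseline_score, candidate_score, tolerance=0.0):
    """True when the candidate scores lower than the baseline beyond the tolerance."""
    return candidate_score < baseline_score - tolerance


# Hypothetical test set: (input, expected output) pairs.
test_cases = [
    ("2+2", "4"),
    ("capital of France", "Paris"),
    ("3*3", "9"),
]

# Stand-ins for two prompt variants; a real setup would call a model here.
baseline_answers = {"2+2": "4", "capital of France": "Paris", "3*3": "9"}
candidate_answers = {"2+2": "4", "capital of France": "Paris", "3*3": "6"}

baseline_score = exact_match_score(baseline_answers.get, test_cases)
candidate_score = exact_match_score(candidate_answers.get, test_cases)
regressed = is_regression(baseline_score, candidate_score)
```

Exact match is only one possible evaluator; the same comparison works with any scoring function that returns a number per variant.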

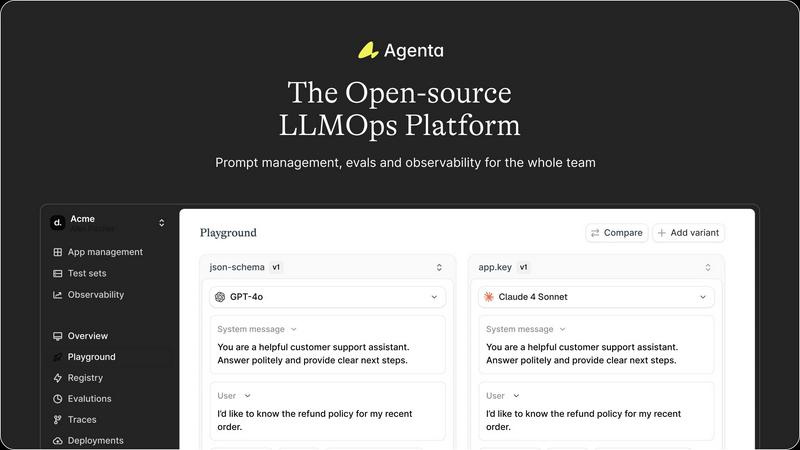

Unified Playground

The platform includes a unified playground where you can compare prompts and models side-by-side. This feature is invaluable for identifying the best-performing prompts and models, allowing for quick iterations and improvements.

Real-time Observability

Agenta provides tools for monitoring production systems and tracing every request. This feature allows you to gather user feedback efficiently, debug your AI systems, and detect regressions, ensuring a smoother user experience.

OpenMark AI

User-Friendly Task Configuration

OpenMark AI offers a simple yet powerful task configuration interface, allowing users to describe their benchmarking tasks effortlessly. Whether you're looking to test for classification, translation, or data extraction, this feature simplifies the setup process, making it accessible even for those with minimal coding experience.

Comprehensive Model Comparison

With OpenMark AI, you can test over 100 different models concurrently, giving you a broad perspective on which AI solution fits your specific needs. This comprehensive comparison allows users to evaluate performance metrics like accuracy and stability under various conditions, ensuring that you select the best model for your application.

Real-Time API Call Results

OpenMark AI provides real-time results from actual API calls to models, so you work with current performance data rather than outdated metrics or marketing claims. Because every model runs under identical conditions, teams can compare them fairly and make better-informed decisions.

No Setup Hassles

One of the standout aspects of OpenMark AI is its seamless user experience, which eliminates the need for API key configurations and complex setups. Users can dive straight into benchmarking without worrying about technical barriers, making it an ideal choice for teams looking to integrate LLMs quickly and efficiently.

Use Cases

Agenta

Collaborative Prompt Development

In teams where multiple stakeholders are involved, Agenta facilitates collaborative prompt development by providing a shared workspace. This enables product managers, developers, and domain experts to work together effectively, improving the quality of prompts.

Rigorous Evaluation Processes

Agenta is ideal for organizations that require rigorous evaluation processes. By automating evaluations and integrating domain expert feedback, teams can ensure that their LLMs meet high standards before deployment, reducing the risk of errors in production.

Debugging and Troubleshooting

When issues arise in production, Agenta’s observability tools help teams trace failures to their source. This capability allows for more efficient debugging, as you can pinpoint problems quickly and take corrective action.

Rapid Iteration of LLMs

For teams focused on rapid iteration, Agenta provides the tools necessary to test and compare various prompts and models in real time. This accelerates the development cycle, allowing businesses to bring reliable AI features to market faster.

OpenMark AI

Model Selection for AI Features

When developing new AI features, teams can leverage OpenMark AI to compare different models, ensuring that they choose the one that best meets their requirements in terms of performance and cost. This process minimizes the risk of deploying a suboptimal model and enhances overall project success.

Pre-Deployment Validation

OpenMark AI serves as a valuable tool for validating models before they go live. By running benchmarks and analyzing performance metrics, teams can confirm that the selected model will deliver consistent and reliable results, reducing the likelihood of post-deployment issues.

Cost Efficiency Assessment

For organizations working within budget constraints, OpenMark AI allows for a detailed analysis of cost per API call. This insight helps teams prioritize models that offer the best value for their specific tasks.
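
For intuition, cost per API call can be estimated by hand when a provider bills by token. The sketch below assumes token-based pricing quoted per million tokens; the function name, model names, and prices are made up for illustration and are neither real vendor pricing nor OpenMark AI's own calculation.

```python
def cost_per_request(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost in USD for one request, given prices per million tokens."""
    total = input_tokens * input_price_per_m + output_tokens * output_price_per_m
    return total / 1_000_000


# Hypothetical prices in USD per million tokens: (input, output).
prices = {
    "model-a": (1.0, 2.0),
    "model-b": (4.0, 8.0),
}

# Same request size for every model: 1,000 input tokens and 500 output tokens.
costs = {
    name: cost_per_request(1000, 500, input_price, output_price)
    for name, (input_price, output_price) in prices.items()
}
cheapest = min(costs, key=costs.get)
```

Comparing models on an identical request size keeps the comparison fair, since cost otherwise varies with prompt and response length.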

Consistency Testing in Outputs

OpenMark AI is useful for teams that require consistent output from language models. By benchmarking models against the same tasks multiple times, users can gauge how stable model performance is over repeated runs and choose a model that delivers reliable results.
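
One simple way to think about stability over repeated runs is the spread of scores for the same task. The sketch below is an assumption about how such a summary could be computed, not OpenMark AI's method: it uses the population standard deviation from Python's `statistics` module, and the model names and scores are invented.

```python
from statistics import mean, pstdev


def stability_summary(scores_by_model):
    """Return the mean score and spread (population std dev) for each model."""
    return {
        model: {"mean": mean(scores), "spread": pstdev(scores)}
        for model, scores in scores_by_model.items()
    }


# Hypothetical quality scores (0.0 to 1.0) from five runs of the same task.
runs = {
    "model-a": [0.9, 0.9, 0.9, 0.9, 0.9],
    "model-b": [1.0, 0.6, 1.0, 0.7, 1.0],
}

summary = stability_summary(runs)
# The model with the smallest spread is the most consistent across runs.
most_stable = min(summary, key=lambda model: summary[model]["spread"])
```

Here model-b reaches the highest single score but varies widely, which is the kind of variance a single run would hide.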

Overview

About Agenta

Agenta is an open-source LLMOps platform designed as a comprehensive solution for teams developing large language model (LLM) applications. It addresses the chaos often associated with LLM development by centralizing disparate workflows into a structured and collaborative environment. With Agenta, developers, product managers, and domain experts can come together, enhancing communication and efficiency. The platform provides integrated tools for prompt management, evaluation, and observability, transforming the LLM development process into a systematic engineering discipline. By eliminating guesswork and silos, Agenta helps teams ship reliable AI features with confidence. If your organization has been struggling with the unpredictability of LLMs and disjointed workflows, Agenta offers the infrastructure needed to streamline development and foster collaboration.

About OpenMark AI

OpenMark AI is a web application designed for task-level benchmarking of large language models (LLMs). Users describe what they want to test in plain language and evaluate multiple models simultaneously within a single session. OpenMark AI reports cost per request, latency, scored quality, and stability through repeated runs, so users can see the variance in model performance rather than relying on a single lucky output.

Tailored for developers and product teams, OpenMark AI streamlines the model selection process before launching AI features. Its hosted benchmarking service eliminates the hassle of configuring various API keys, allowing users to focus on their testing without the technical overhead. By offering side-by-side results derived from actual API calls, OpenMark AI empowers users to make informed decisions based on real data rather than marketing claims. This platform is particularly beneficial for those who prioritize cost efficiency and consistency in output quality, making it an essential tool for pre-deployment assessments in AI projects.

Frequently Asked Questions

Agenta FAQ

What makes Agenta different from other LLM tools?

Agenta stands out by providing a comprehensive, open-source platform that centralizes workflows, enhances collaboration, and applies LLMOps best practices. This structured approach minimizes guesswork and maximizes reliability.

Is Agenta suitable for small teams?

Absolutely. Agenta is designed to cater to teams of all sizes, from small startups to large enterprises. Its collaborative features and centralized management make it particularly useful for teams looking to streamline their LLM development processes.

Can Agenta integrate with existing tools?

Yes, Agenta integrates with various frameworks and model providers, including LangChain and OpenAI. This flexibility allows teams to keep their existing tech stack while benefiting from Agenta's features.

Is there a community for Agenta users?

Yes, Agenta boasts an active community where users can ask questions, share ideas, and collaborate on projects. Joining the community can help you get the most out of Agenta and connect with other AI builders.

OpenMark AI FAQ

What types of models can I benchmark with OpenMark AI?

OpenMark AI supports a wide range of models, allowing users to test over 100 different LLMs on various tasks, including classification, translation, and more.

Do I need to set up API keys to use OpenMark AI?

No, OpenMark AI eliminates the need for individual API key setups. Users can begin benchmarking immediately without the technical overhead of configuring multiple keys.

How does OpenMark AI ensure the accuracy of comparison results?

OpenMark AI provides side-by-side results based on real API calls to models, ensuring that you receive accurate and up-to-date performance data rather than relying on cached or promotional figures.

Is OpenMark AI suitable for non-developers?

Absolutely! The user-friendly interface and no-code approach make OpenMark AI accessible to non-developers, allowing anyone interested in AI model benchmarking to participate without requiring extensive technical knowledge.

Alternatives

Agenta Alternatives

Agenta is an open-source platform designed to help teams build and manage reliable LLM applications, serving as a mission control for LLMOps. It centralizes the chaotic process of developing AI features, enabling collaboration among developers, product managers, and domain experts. Users often seek alternatives to Agenta due to various factors such as pricing, specific feature sets, or compatibility with existing workflows and platforms. When choosing an alternative, it's important to consider the platform's ability to facilitate experimentation, provide robust evaluation tools, and support seamless collaboration across team members. Ensuring that the alternative aligns with your team's specific needs and workflows can make a significant difference in the development process.

OpenMark AI Alternatives

OpenMark AI is a powerful web application designed for benchmarking over 100 large language models (LLMs) based on specific tasks. It enables users to evaluate various models in terms of cost, speed, quality, and stability, providing a comprehensive view of performance rather than relying on single outputs. This tool primarily serves developers and product teams who require thorough comparisons to make informed decisions before implementing AI features. Users often seek alternatives to OpenMark AI due to factors such as pricing, specific feature sets, or platform compatibility. It's essential to consider what aspects matter most for your use case, whether that’s the breadth of model support, ease of use, or any unique functionalities that align with your project’s needs. When selecting an alternative, prioritize tools that deliver accurate benchmarking, transparent pricing, and consistent performance metrics, ensuring they fit seamlessly into your development workflow.